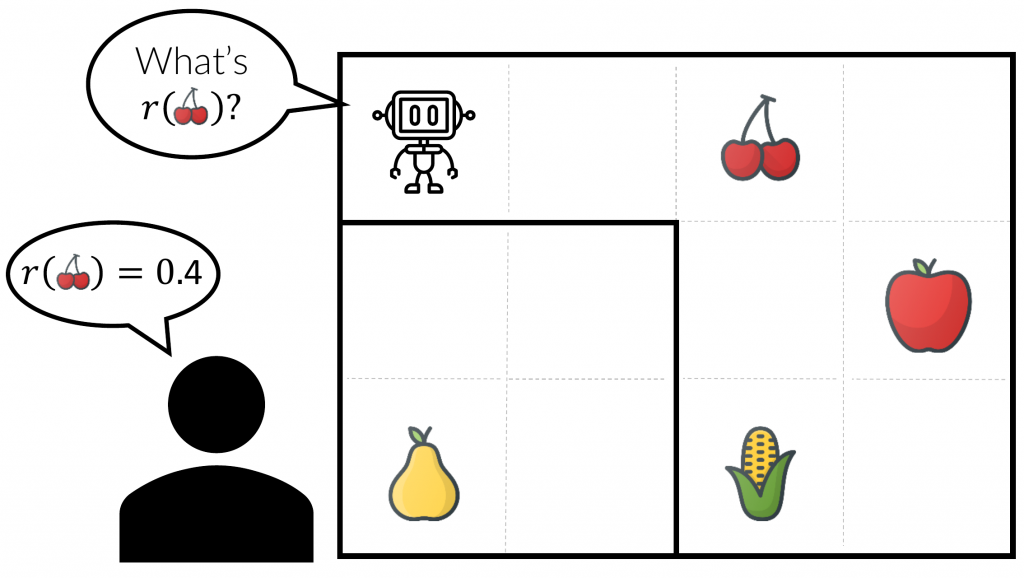

Reinforcement learning from human feedback relies on reward models trained from preference data. But collecting high-quality preference labels is expensive, and reward models trained on limited data can be unreliable. Our two projects address these connected problems from complementary angles.

ActiveUltraFeedback introduces a modular active learning pipeline that uses uncertainty-aware reward estimates to select informative response pairs, reducing the amount of preference data needed for strong downstream performance by 3-6x.
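The exact acquisition rule ActiveUltraFeedback uses is not spelled out here. The sketch below shows one common way to turn uncertainty-aware reward estimates into a pair-selection rule. It assumes an ensemble of reward models as the uncertainty estimate and a Bradley-Terry link from rewards to preference probabilities. For each candidate pair it measures how much the ensemble members disagree about which response wins, and it picks the pair with the most disagreement. The names `bt_prob` and `select_pair` are hypothetical illustrations, not part of the project's code.

```python
import math
from itertools import combinations
from statistics import pstdev


def bt_prob(r_a: float, r_b: float) -> float:
    """Bradley-Terry probability that response a is preferred over response b."""
    return 1.0 / (1.0 + math.exp(-(r_a - r_b)))


def select_pair(ensemble_rewards: list[list[float]]) -> tuple[int, int, float]:
    """Pick the response pair the (assumed) reward ensemble disagrees on most.

    ensemble_rewards[k][i] is the reward that ensemble member k assigns to
    candidate response i for a single prompt. The uncertainty score of a pair
    (i, j) is the population standard deviation, across members, of the
    predicted probability that i beats j.
    """
    n_responses = len(ensemble_rewards[0])
    best = None
    for i, j in combinations(range(n_responses), 2):
        probs = [bt_prob(member[i], member[j]) for member in ensemble_rewards]
        score = pstdev(probs)
        if best is None or score > best[2]:
            best = (i, j, score)
    return best


# Hypothetical rewards: 3 ensemble members scoring 3 candidate responses.
rewards = [
    [1.0, 0.0, 2.0],   # ensemble member 0
    [1.0, 0.5, -1.0],  # ensemble member 1
    [1.0, 0.2, 0.5],   # ensemble member 2
]
i, j, score = select_pair(rewards)
print(i, j)  # → 1 2
```

All members agree that response 0 beats response 1, so that pair is uninformative. The members disagree sharply on responses 1 and 2, so labelling that pair is expected to teach the reward model the most. A real pipeline would typically combine such a score with other criteria, such as response quality or diversity.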

RewardUQ complements ActiveUltraFeedback by studying how to measure and compare uncertainty in reward models, providing a unified framework for evaluating both their accuracy and their calibration.
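To make "accuracy and calibration" concrete for a reward model, here is a minimal sketch under stated assumptions. It assumes the model outputs a probability `p` that the first response in a pair is preferred (for example through a Bradley-Terry link) and that the label `y` is 1 when annotators preferred that response. Accuracy checks whether the predicted winner matches the label. Expected calibration error (ECE) with equal-width confidence bins is one common calibration metric; whether RewardUQ uses this exact variant is not stated here. The function names are hypothetical.

```python
def pairwise_accuracy(probs: list[float], labels: list[int]) -> float:
    """Fraction of pairs where the predicted winner (p >= 0.5) matches the label."""
    correct = sum(int((p >= 0.5) == bool(y)) for p, y in zip(probs, labels))
    return correct / len(probs)


def expected_calibration_error(
    probs: list[float], labels: list[int], n_bins: int = 10
) -> float:
    """Binary ECE: bin predictions by confidence, compare confidence to accuracy.

    Confidence is the probability assigned to the predicted winner, so it lies
    in [0.5, 1]. Each bin contributes |mean confidence - accuracy|, weighted by
    the fraction of pairs that fall into it.
    """
    n = len(probs)
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        pred = 1 if p >= 0.5 else 0
        conf = p if pred == 1 else 1.0 - p
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the top bin
        bins[idx].append((conf, pred == y))
    ece = 0.0
    for b in bins:
        if b:
            avg_conf = sum(c for c, _ in b) / len(b)
            acc = sum(ok for _, ok in b) / len(b)
            ece += len(b) / n * abs(avg_conf - acc)
    return ece


# Hypothetical predictions on four labelled preference pairs.
probs = [0.95, 0.85, 0.25, 0.65]
labels = [1, 0, 0, 1]
print(pairwise_accuracy(probs, labels))                     # → 0.75
print(round(expected_calibration_error(probs, labels), 3))  # → 0.375
```

The two numbers can diverge. A model can rank most pairs correctly and still be overconfident on the pairs it gets wrong, as the second pair shows. This is why a framework for reward-model uncertainty reports calibration alongside accuracy.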

Together, these works show how uncertainty can be used both as a practical signal for efficient preference data generation and as a diagnostic tool for reliable reward modelling.